Why Most AI Marketing Initiatives Fail: The Missing Governance Layer

Strategic Context

AI investment in marketing is accelerating. Tools are being deployed. Pilots are running. Budgets are shifting.

Yet measurable, sustained operating impact remains inconsistent.

The failure is rarely technical. It is structural.

Most AI marketing initiatives fail because they are introduced as capability experiments rather than embedded within a defined governance architecture. Without a decision framework, sequencing discipline, and an executive oversight model, AI becomes additive noise rather than institutional leverage.

This article examines why AI marketing initiatives stall and outlines the governance layer required to convert experimentation into operating maturity.

The Organizational Reality Behind AI Underperformance

In most enterprise marketing environments, AI adoption begins in one of three ways:

A performance marketing team tests generative content tools.

A RevOps group introduces predictive scoring.

An innovation mandate encourages experimentation across teams.

These efforts are not inherently flawed. The problem emerges when experimentation outpaces structural alignment.

The result is familiar:

Tool sprawl without accountability

Shadow AI usage outside approved systems

Misaligned performance metrics

Confusion around decision rights

Executive skepticism regarding ROI

The organization begins to experience AI as fragmentation rather than acceleration.

What Is AI Marketing Governance?

AI marketing governance is the structured oversight framework that defines how AI initiatives are prioritized, approved, sequenced, monitored, and evaluated within a marketing organization.

It establishes:

Decision rights

Risk controls

Capability sequencing

Performance accountability

Escalation paths

Without governance, AI activity becomes decentralized experimentation. With governance, AI becomes an operating capability.

Governance is not bureaucracy. It is decision architecture.

The Five Structural Reasons AI Marketing Initiatives Fail

1. Tool-First Adoption Instead of Model-First Design

Organizations adopt AI tools before defining how AI integrates into the broader marketing operating model. Tools become tactical patches rather than strategic enablers.

2. Undefined Decision Authority

No clarity exists regarding who can approve AI use cases, allocate budget, or define success metrics. Diffuse authority leads to stalled initiatives.

3. Lack of Diagnostic Sequencing

AI initiatives are launched without assessing organizational readiness, data integrity, workflow maturity, or risk exposure. Execution begins before constraints are understood.

4. Absence of Risk Controls

Few organizations define guardrails for data governance, compliance exposure, model bias, brand risk, or performance volatility. As risk incidents emerge, executive confidence declines.

5. No Operating Cadence for Oversight

AI initiatives lack structured review cycles, performance audits, or escalation protocols. What begins as momentum becomes unmanaged drift.

These are structural failures, not technical ones.

The Governance Reframing: From Experimentation to Architecture

AI should not be introduced as a tool layer. It must be embedded within a structured operating system.

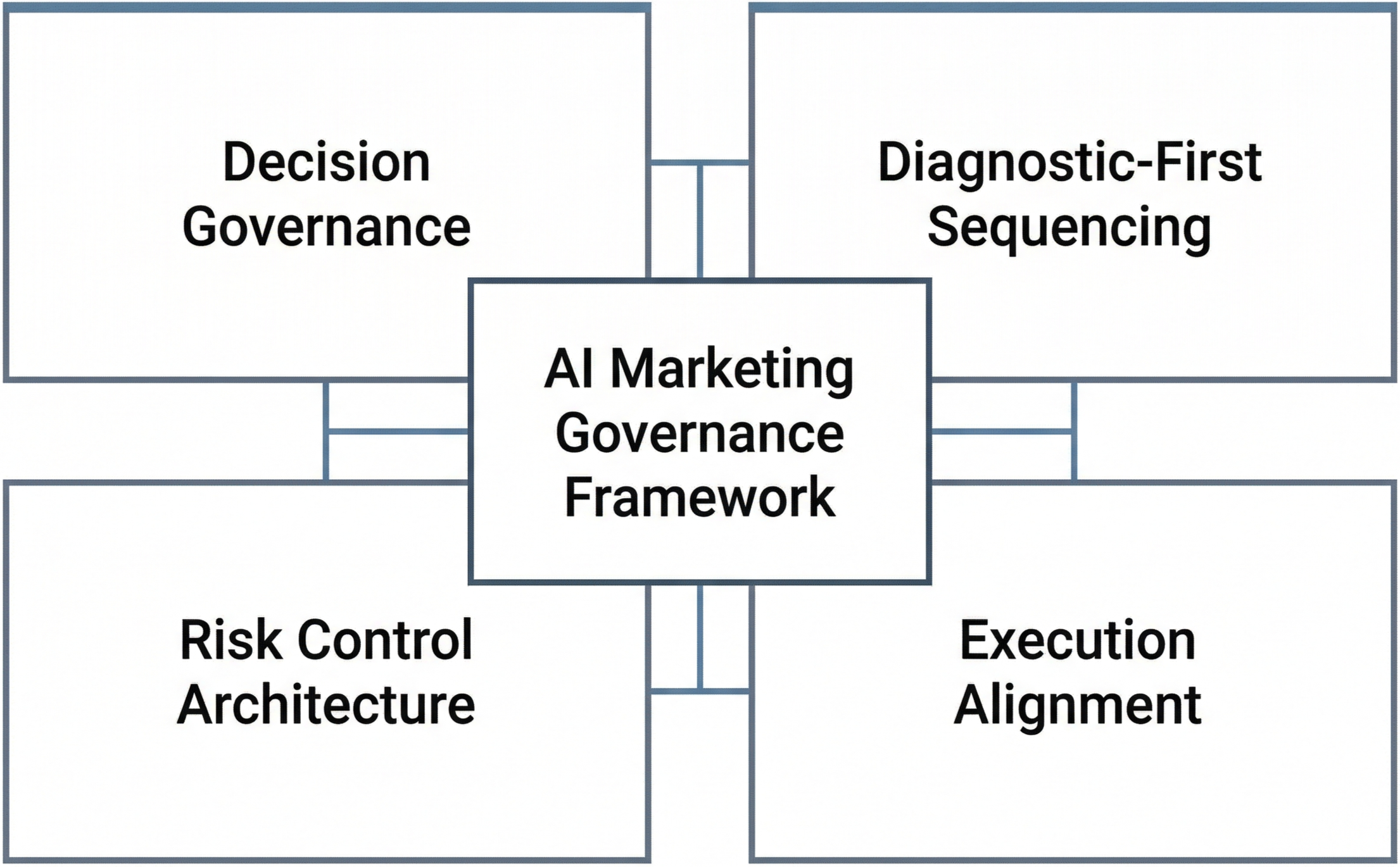

A governance-based AI marketing framework includes four components:

1. Decision Governance

Define ownership for AI prioritization, budget allocation, risk approval, and performance evaluation.

2. Diagnostic-First Sequencing

Assess readiness before implementation. Evaluate data quality, workflow maturity, measurement standards, and compliance exposure prior to deployment.

3. Execution Alignment

Ensure AI initiatives map to defined growth priorities, not opportunistic experimentation.

4. Risk Control Architecture

Establish guardrails, monitoring standards, escalation pathways, and auditability protocols before scaling AI initiatives.

This is the difference between experimentation velocity and operating maturity.

What This Means for CMOs and Executive Leaders

AI is no longer an innovation conversation. It is a structural accountability conversation.

For CMOs and CROs, the executive questions shift from what AI can do to how it is governed:

Do we have a defined AI decision authority model?

Are AI initiatives sequenced against organizational readiness?

Are risk controls codified before scaling?

Is AI performance audited with the same rigor as channel investment?

If the answers to these questions are unclear, the organization does not have an AI capability. It has isolated experiments.

When to Conduct an AI Governance Diagnostic

An AI governance diagnostic is required when:

AI pilots are active but executive confidence is declining

Teams are experimenting independently

Measurement inconsistencies are emerging

Compliance concerns are being raised post-implementation

Budget discussions are occurring without structural clarity

In these situations, governance must precede expansion.

Execution should follow architecture, not the reverse.

Decision Rights Architecture in AI Marketing Governance

Governance cannot exist without explicit decision rights.

In AI marketing environments, four distinct decision domains must be defined:

1. Investment Authority

Who can approve AI investment?

At what threshold does executive approval become mandatory?

What financial exposure requires escalation?

2. Prioritization Authority

Who determines which AI initiatives proceed first?

Are use cases evaluated against strategic growth objectives or operational convenience?

3. Risk Authorization

Who has authority to accept data, compliance, or brand risk?

Are risk tolerances defined formally or assumed implicitly?

4. Performance Accountability

Who defines success criteria?

Who evaluates performance against governance standards rather than vendor metrics?

Without defined ownership across these four domains, governance remains rhetorical.

Executive Oversight Cadence

AI governance must operate within a defined review structure. This includes:

• Formal initiative approval checkpoints

• Scheduled governance reviews

• Risk reassessment intervals

• Performance evaluation cycles

• Escalation pathways when variance occurs

Governance without cadence becomes documentation.

Governance with cadence becomes institutional discipline.

Governance Maturity Levels

Organizations typically move through three levels of governance maturity:

Ad Hoc Governance

Informal oversight, undefined decision rights, reactive risk management.

Defined Governance

Explicit ownership, documented sequencing, structured review cycles.

Institutionalized Governance

Governance integrated into budgeting, strategic planning, and executive reporting frameworks.

AI maturity is not possible without governance maturity.

Executive Summary

Most AI marketing initiatives fail due to structural governance gaps.

Tool adoption without operating design creates fragmentation.

Undefined decision rights stall momentum.

Lack of diagnostic sequencing introduces hidden constraints.

Risk controls must be defined before scaling AI capabilities.

Governance converts experimentation into accountable performance.

AI maturity requires decision architecture, not enthusiasm.

AI does not fail because of insufficient ambition.

It fails because organizations attempt acceleration without structure.

Governance is not a constraint on AI performance.

It is the precondition for it.

Related Structural Reads:

• Diagnostic-First AI Adoption: A Structured Alternative to Tool-First Strategy

• AI in Marketing: Who Owns the Operating Model?