Diagnostic-First AI Adoption: A Structured Alternative to Tool-First Strategy

The Structural Problem with Tool-First AI

Most AI adoption in marketing begins with enthusiasm.

A team identifies a promising tool.

A vendor demonstrates performance gains.

A pilot is launched.

The initiative may succeed tactically. It often fails structurally.

Tool-first AI adoption introduces capability before confirming readiness, sequencing authority, or defining governance controls. The result is familiar:

Fragmented experimentation

Inconsistent performance measurement

Hidden risk exposure

Erosion of executive confidence

AI initiatives rarely fail because the tools are ineffective. They fail because adoption bypasses structural evaluation.

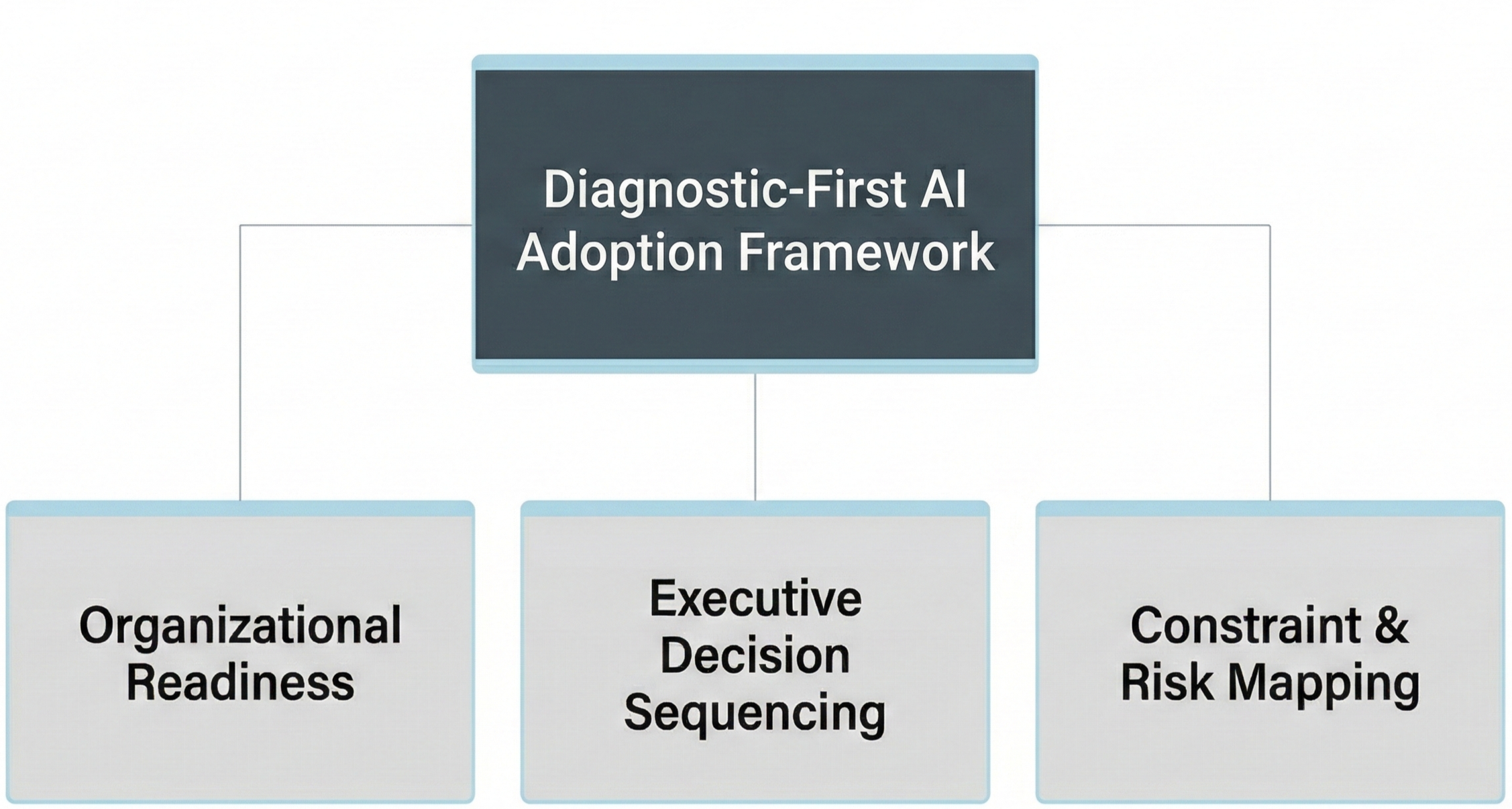

What Is a Diagnostic-First AI Adoption Model?

A diagnostic-first AI adoption model is a governance-based sequencing framework that evaluates organizational readiness, structural constraints, and executive decision alignment before AI capabilities are deployed.

It answers three questions before execution begins:

Are we structurally ready?

Are we sequenced correctly?

Are risks defined and controlled?

This approach shifts AI from experimentation to accountable capability development.

Why Tool-First Strategy Creates Structural Instability

When organizations adopt AI tools without diagnostic sequencing, four predictable patterns emerge:

1. Capability Overreach

AI is deployed in areas where data integrity, workflow maturity, or measurement discipline is insufficient to support it.

2. Decision Ambiguity

No clear ownership exists for prioritization, investment approval, or performance accountability.

3. Unmapped Constraints

Operational bottlenecks, compliance exposure, or integration limits surface only after deployment.

4. Scaling Before Governance

Early success leads to expansion without defined oversight architecture.

These are not implementation errors. They are sequencing errors.

The Three Dimensions of Diagnostic-First AI Adoption

A structured diagnostic framework evaluates three dimensions before AI investment proceeds.

1. Organizational Readiness

Assess:

Data quality and accessibility

Measurement consistency

Workflow maturity

Talent capability

Technology integration health

Readiness determines whether AI accelerates performance or amplifies disorder.

2. Executive Decision Sequencing

Define:

AI investment prioritization criteria

Governance oversight structure

Budget allocation authority

Escalation pathways

Review cadence

Sequencing determines whether AI initiatives align with strategic growth priorities or operate independently of them.

3. Constraint and Risk Mapping

Identify:

Compliance exposure

Data privacy implications

Brand governance risks

Model volatility and bias concerns

Operational bottlenecks

Constraint mapping surfaces risks before scaling rather than during it.

AI Marketing Readiness Scoring Framework

A diagnostic-first model requires measurable assessment criteria. Readiness cannot remain qualitative.

A structured AI marketing readiness evaluation should score organizations across five dimensions:

1. Data Integrity

Is performance data accessible, reliable, and consistently governed?

Are measurement definitions standardized across teams?

2. Workflow Maturity

Are marketing processes documented and repeatable?

Can AI be integrated without destabilizing day-to-day operations?

3. Decision Governance

Are AI investment thresholds defined?

Are prioritization criteria explicit?

Is risk ownership assigned?

4. Risk and Compliance Controls

Are data privacy, brand governance, and compliance standards documented?

Are escalation pathways defined before deployment?

5. Executive Alignment

Is there clarity around strategic AI objectives?

Are success criteria tied to business outcomes rather than experimentation metrics?

Each dimension should be scored on a four-level maturity scale:

• Reactive (Ad Hoc)

• Emerging (Partially Defined)

• Structured (Documented and Governed)

• Institutionalized (Integrated and Measured)

Readiness is determined not by tool availability, but by structural maturity across these five dimensions.

Organizations scoring below “Structured” in multiple dimensions should not scale AI initiatives until structural gaps are addressed.
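The scoring rule above can be made concrete with a small sketch. It assumes each of the five dimensions receives exactly one rating on the four-level scale, and that the levels can be ordered by assigning them the integers 1 to 4 (an assumption for illustration, not part of the framework). The names `Maturity`, `DIMENSIONS`, `ReadinessResult`, and `assess_readiness` are hypothetical. The sketch flags any dimension below "Structured" as a gap and blocks scaling when two or more gaps exist; the text does not say what a single gap implies, so one gap is reported but does not by itself trigger the block.

```python
from dataclasses import dataclass
from enum import IntEnum


class Maturity(IntEnum):
    """Four-level maturity scale. The integer values (an assumption) only impose an order."""
    REACTIVE = 1           # Ad hoc
    EMERGING = 2           # Partially defined
    STRUCTURED = 3         # Documented and governed
    INSTITUTIONALIZED = 4  # Integrated and measured


# The five readiness dimensions named in the framework.
DIMENSIONS = (
    "Data Integrity",
    "Workflow Maturity",
    "Decision Governance",
    "Risk and Compliance Controls",
    "Executive Alignment",
)


@dataclass
class ReadinessResult:
    gaps: list[str]        # dimensions scored below STRUCTURED
    blocks_scaling: bool   # True when two or more dimensions are gaps


def assess_readiness(scores: dict[str, Maturity]) -> ReadinessResult:
    """Hypothetical helper: below STRUCTURED in multiple dimensions means do not scale."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        # Readiness must be formally assessed: an unscored dimension is an error.
        raise ValueError(f"unscored dimensions: {missing}")
    gaps = [d for d in DIMENSIONS if scores[d] < Maturity.STRUCTURED]
    return ReadinessResult(gaps=gaps, blocks_scaling=len(gaps) >= 2)


# Illustrative scores, not real assessment data.
example = {
    "Data Integrity": Maturity.EMERGING,
    "Workflow Maturity": Maturity.STRUCTURED,
    "Decision Governance": Maturity.REACTIVE,
    "Risk and Compliance Controls": Maturity.STRUCTURED,
    "Executive Alignment": Maturity.INSTITUTIONALIZED,
}
result = assess_readiness(example)
print(result.gaps)            # ['Data Integrity', 'Decision Governance']
print(result.blocks_scaling)  # True
```

Raising an error for an unscored dimension, rather than treating it as a default level, reflects the framework's position that readiness cannot remain qualitative or assumed.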

From Experimentation to Structured Capability Development

Diagnostic-first adoption reframes AI as an operating capability, not a tactical enhancement.

It introduces discipline before deployment.

The sequence becomes:

Evaluate structural readiness

Define decision architecture

Map constraints and risk exposure

Approve sequenced initiatives

Deploy under defined oversight

This approach does not slow innovation.

It prevents compounding instability.

What This Means for CMOs and Executive Leaders

For executive leaders, the central shift is conceptual:

AI should not be evaluated by what it can do.

It should be evaluated by whether the organization is prepared to absorb it.

Before approving AI expansion, leadership should ask:

Has readiness been formally assessed?

Are decision rights defined?

Have constraints been mapped?

Is governance embedded before scaling?

If these conditions are absent, acceleration introduces fragility.

When to Conduct an AI Marketing Diagnostic

A diagnostic assessment is required when:

Multiple AI pilots are underway

Tool sprawl is emerging

Performance attribution is inconsistent

Risk oversight is informal

Executive reporting lacks clarity

Diagnostic sequencing should precede expansion.

Without it, AI remains experimental.

With it, AI becomes structural.

Executive Summary

Tool-first AI adoption creates structural instability.

Diagnostic-first sequencing evaluates readiness before deployment.

Organizational readiness determines whether AI amplifies order or disorder.

Executive decision architecture must precede scaling.

Constraint mapping surfaces risks before expansion rather than during it.

Governance-first sequencing converts AI into an operating capability.

AI maturity is not achieved through faster experimentation.

It is achieved through disciplined sequencing.

Related Structural Reads:

• The AI Marketing Operating Model: Why Tools Alone Do Not Create Maturity

• Measuring AI Marketing Maturity: How CMOs Assess Structural Progress